Dedicated interaction concept for each pair of AR smart glasses

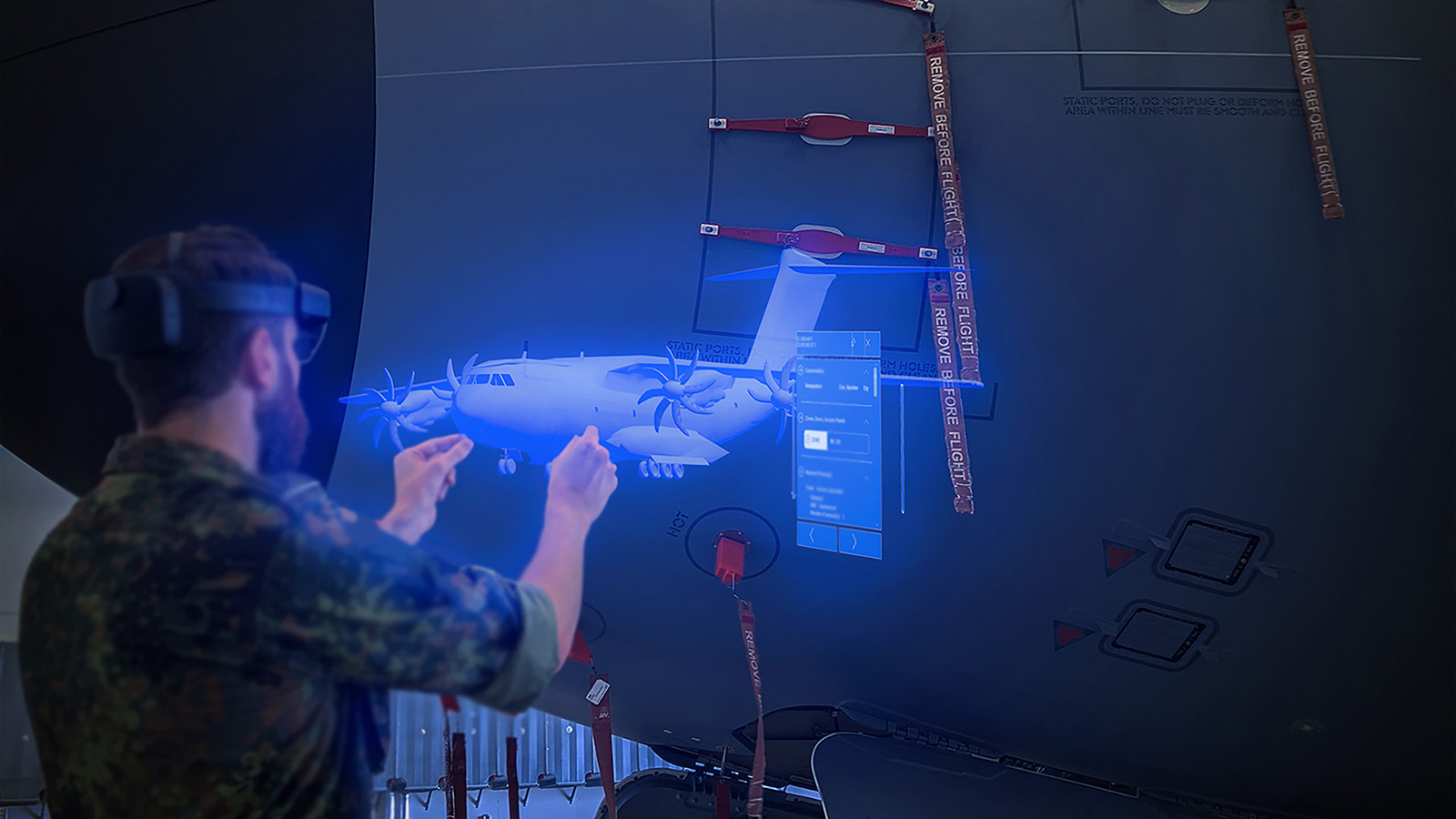

“The concept development included many aspects, such as taking into account the knowledge of domain experts, analyzing the approaches taken by the test persons in the workplace, checking their personal gear and the compatibility thereof with the AR smart glasses, and developing a unique interaction concept for each pair of glasses, based on the various requirements in the categories of use, organization and design,” explains Martin Mundt, a scientist in the ‘Human-Machine Systems’ department, summarizing the course of action. The concepts were also adapted to two sharply contrasting areas of application. The first was the cockpit, where there is little room for extensive gestures and where the elevated noise level can interfere with speech recognition. The second was a workshop environment, whose controlled lighting made it well suited to the automatic detection of objects. “We expanded the existing regulations, implemented them in 3D and supplemented them with animations visualizing certain steps directly on the virtually displayed component. That could be, for example, measuring the resistance of the battery or special notations on the model of the cockpit,” says the researcher.

Highly versatile interaction elements

The team relied on multimodal input techniques, which allow the user to interact regardless of the constraints imposed by the task at hand or by the environment. To this end, the team designed a range of interaction elements that can be operated by gaze or gesture control. Several approaches to avoiding unwanted input during gaze control were considered. Ultimately, the team decided that an input is triggered when the user briefly gazes at the interaction element. An indicator (similar to a progress bar) fills up, showing how much longer the user has to look at the element in order to trigger it. Alternatively, the user can activate the interaction element by pressing it with their index finger.
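The dwell-based activation described above can be illustrated with a minimal Python sketch. It is not the research team's implementation: the class name `DwellTarget`, its methods and the one-second threshold are assumptions made for illustration, as the article only says that the user gazes "briefly" at the element.

```python
from dataclasses import dataclass


@dataclass
class DwellTarget:
    """Hypothetical interaction element fired by gaze dwell or a direct press."""

    dwell_seconds: float = 1.0  # assumed threshold; the article gives no value
    elapsed: float = 0.0        # time the gaze has rested on the element so far
    triggered: bool = False     # True once the current dwell has already fired

    @property
    def progress(self) -> float:
        """Fill level of the indicator, from 0.0 (empty) to 1.0 (full)."""
        return min(self.elapsed / self.dwell_seconds, 1.0)

    def update(self, gazed_at: bool, dt: float) -> bool:
        """Advance by dt seconds; return True on the frame the element fires."""
        if not gazed_at:
            # Looking away resets the timer, so a passing glance never triggers.
            self.elapsed = 0.0
            self.triggered = False
            return False
        self.elapsed += dt
        if self.elapsed >= self.dwell_seconds and not self.triggered:
            # Fire once per dwell; the user must look away to re-arm the element.
            self.triggered = True
            return True
        return False

    def press(self) -> bool:
        """Alternative modality: an index-finger press fires immediately."""
        self.elapsed = 0.0
        self.triggered = False
        return True


if __name__ == "__main__":
    target = DwellTarget(dwell_seconds=1.0)
    for _ in range(5):
        fired = target.update(gazed_at=True, dt=0.25)
        print(f"indicator {target.progress:.0%}, fired: {fired}")
```

Resetting the timer as soon as the gaze leaves the element is one simple way to avoid unwanted input. A real system would probably also tolerate short gaze dropouts, which this sketch does not attempt.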

Intuitive interaction with smart glasses

Overall, the subjects rated the interaction as “intuitive”. Gaze control was praised, and the majority of the testers preferred it to gesture or voice control. The direct overlaying of relevant information during battery maintenance helped to identify specific components. Animations used for additional illustration of assembly tasks were rated positively. Gesture control, on the other hand, caused some uncertainty among users. As a result, in addition to the demo applications, the research team developed a learning environment that used animations to teach users basic gestures and interaction techniques.

Remote maintenance as a further option

As a next step, the team wants to make it possible to record and digitize documentation and notes on the status of work, and to call in experts. “We want to address remote maintenance tasks that primarily focus on interaction between the participants,” says Mundt. Beyond the use cases already analyzed, the researchers want to investigate further areas of application, given that other sectors can also benefit from the use of augmented reality for maintenance and repair work. In this way, the shortage of skilled workers in the energy sector could be offset to some extent. For example, AR could assist less-experienced technicians with the installation of heat pumps, since the three-dimensional step-by-step instructions also guide beginners safely through the maintenance tasks.